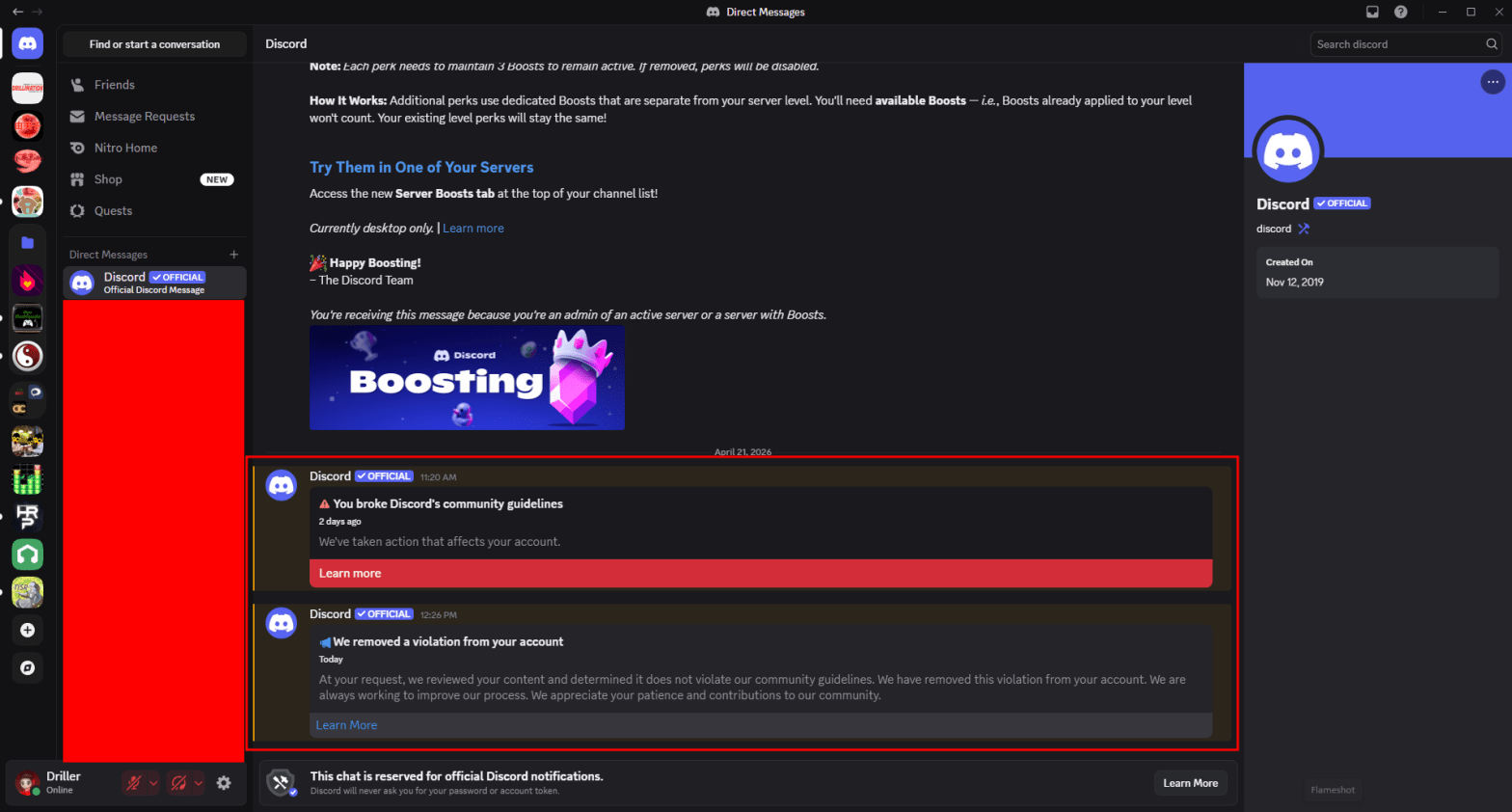

On the morning of Tuesday, April 21, 2026, at around 11 AM EDT, while working on design documents for the next project after Touhou Meishuugeki ~ Cursed Sweeper and Bewildering Parallel, the Prophet Driller of Drillimation Systems found something very alarming in his Discord inbox: a community guidelines violation for something he did not do. A message he sent consisted of just two emojis and was flagged for “hateful conduct”, even though neither emoji contained anything that would constitute a violation. What happened?

The Prophet appealed, and in less than two hours, Discord wiped the violation from his account standing because the messages did not contain any hateful content. This was not the result of a cyberattack. Unfortunately, the flagged messages were still removed from the platform. The Prophet started using Discord in November 2016, though he didn’t use it often until early 2019, and in nearly a decade he had never encountered anything like this. The incident reflects a growing trend in which social media accounts are flagged or suspended without any policy violation, because AI is now enforcing sites’ community guidelines.

Systems powered by machine learning and AI are not perfect and can mistakenly flag innocent content. These systems, designed to detect spam or policy breaches (such as safety or copyright violations), often misinterpret context, resulting in wrongful bans. Affected users can file in-app appeals, but whether the ban is temporary or permanent, many appeals are rejected almost instantly because no human reviews them.

Managing a moderation team for a website is expensive, and companies sometimes outsource this work to third-party vendors or use artificial intelligence to lessen the workload and save money. There are several reasons why accounts can get wrongfully flagged:

- AI systems can mistake harmless photos, such as beach vacation images, for sexual exploitation or other serious violations.

- Automated tools prioritize pattern recognition over human understanding, causing innocent content to look suspicious.

- A sudden increase in activity, such as from using third-party bots or a spike in followers, can trigger automated spam detection.

- Faulty AI has led to wrongful suspensions for alleged intellectual property, child safety, or community standards violations that the user did not commit.
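
To make the spam-detection point concrete, here is a minimal sketch of how a purely numeric rule can flag a legitimate follower spike. It is an illustration under stated assumptions, not any platform's actual logic: the function name `is_flagged_as_spam`, the `multiplier` threshold, and the sample numbers are all hypothetical.

```python
from statistics import mean


def is_flagged_as_spam(daily_counts, today_count, multiplier=5.0):
    """Flag an account when today's activity far exceeds its recent average.

    Hypothetical rule for illustration only; not any platform's real logic.
    """
    baseline = mean(daily_counts)
    # The rule sees only numbers; it has no idea *why* activity rose.
    return today_count > multiplier * baseline


# A small creator who usually gains about 10 followers a day...
usual_week = [8, 12, 10, 9, 11, 10, 10]

# ...gains 400 in one day after a post goes viral.
viral_day_flagged = is_flagged_as_spam(usual_week, 400)

# An ordinary day stays under the threshold.
normal_day_flagged = is_flagged_as_spam(usual_week, 12)
```

In this sketch the viral day is flagged and the ordinary day is not, even though nothing about the viral day violates a rule. A check like this cannot tell a bot-driven surge from genuine interest, which is why a human review step matters.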

There are several steps that users can take if they are wrongfully flagged.

- Users can appeal in-app. Unfortunately, this process is often powered by AI as well, leading to quick, automated rejections.

- Some users have reported success by getting human support, though this is not a guaranteed fix.

- If nothing else works, legal avenues remain. Users based in the United States can file a complaint with their state attorney general’s office, which has helped some users regain access. If that doesn’t work, arbitration is the last resort, though it takes much longer.

When an account is locked, users can lose access to their data. Business owners such as the Prophet of Drillimation Systems can face income loss due to a suspension of a professional account.

While AI is a necessary tool for managing the sheer scale of modern social media, it cannot be the final judge and jury. An incident involving a two-emoji violation highlights a critical flaw: context blindness. For a community to thrive, platforms must invest in robust human oversight. An appeals process that is handled by the same flawed logic that issues an initial violation isn’t a solution; it’s a closed loop.

As a user, your best defense is staying informed of your rights and maintaining backups of your digital assets. For developers and community leaders, it is more important than ever to advocate for transparency in how these automated systems operate. In a digital world built on communication, AI enforcement is inherently inhumane. If we allow algorithms to silence users over harmless emojis or misinterpreted patterns, we risk losing the very thing that makes these platforms valuable: authentic connection.

If the Prophet loses his Discord account, not only would we lose one of the main platforms that we communicate with our fans on, but our Discord server would also go away. AI should be a shield that protects communities, not a sword that strikes down innocent creators.

